
The role of artificial intelligence in diagnostic medical imaging and next steps for guiding surgical procedures

Funding: This work was supported by the French Agence Nationale de la Recherche (ANR) under the project references ANR-22-CE17-0019-01 and ANR-10-IAHU-02, as well as French state funds managed within the “Plan Investissements d’Avenir”.

Summary

Surgery is witnessing the transformation of operating theatres into smart infrastructures with interconnected cutting-edge devices, where numerous highly specialised professionals collaborate for the benefit of patients. Driven by the large amounts of continuously generated data and by advances in technology, Surgical Data Science has emerged as an interdisciplinary field that aims to improve the safety and outcomes of modern surgery with artificial intelligence (AI) algorithms integrating multimodal medical data. AI-based assistance systems have the potential to automate, in a time-critical manner, the diagnosis from various medical imaging studies that is currently performed by radiologists. The accuracy of such diagnostic and assistive software must match or exceed that of the healthcare professional to be clinically trustworthy, while being fast, cost-effective, widely accessible, and unbiased. Hence, clinically useful integration of smart assistance by AI tools for diagnostic, interventional and intraoperative imaging requires transdisciplinary collaborative exchange between medical and computer science professionals, ideally in shared workspaces.

Diagnostic medical imaging and AI assistance

Clinicians rely on medical imaging to determine treatment strategies and surgical planning. The available technologies provide ever higher resolution, enable three-dimensional reconstructions, and increasingly include functional imaging. Examples of anatomical imaging modalities are ultrasound (US), X-ray, fluoroscopy, computed tomography (CT), and magnetic resonance imaging (MRI). Nuclear medicine hybrid scanning techniques fuse functional imaging, e.g., single-photon emission computed tomography (SPECT) or positron emission tomography (PET), with anatomical imaging, to obtain SPECT/CT and PET/CT, which are important tools in cancer assessment.

Although computer-aided diagnosis (CAD) systems have been developed since the 1960s, it was only in the 2010s, with the explosion of Deep Learning (DL) methods, that these systems started achieving unprecedented levels of diagnostic accuracy, matching or even exceeding the performance of human experts 1. AI applications have the capacity to provide in-depth analysis of various imaging modalities. Such AI algorithms can be trained to distinguish between normal and abnormal findings, thus automating the detection of pathologies or lesions at an early stage, the monitoring of existing diseases, and the uncovering of information that is invisible to the human eye 2. An up-to-date list of commercially available AI tools related to radiology and other imaging domains is accessible on the American College of Radiology Data Science Institute webpage AI Central (https://aicentral.acrdsi.org) 3.

A recent systematic review and meta-analysis summarised the current evidence on the diagnostic accuracy and value of DL in a variety of medical imaging modalities, showing achievements in disease classification in respiratory medicine, ophthalmology, and breast cancer surgery, the fields with the largest number of reported studies. Only a few studies compared the diagnostic accuracy of expert human clinicians with that of DL algorithms 4. Reliable and objective assessment of AI performance currently lags behind the development of new image processing algorithms. Risk of bias assessment tools and reporting guidelines have emerged to address the variability of reporting quality, mitigate validation issues, and improve the suitability of AI studies for implementation in clinical practice 5, 6.
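To illustrate what a head-to-head comparison of diagnostic accuracy involves, the following minimal sketch computes sensitivity and specificity for a DL model and for a clinician against the same reference standard. The function name, the data class, and the toy labels are hypothetical and chosen purely for illustration; they are not taken from any of the cited studies.

```python
from dataclasses import dataclass


@dataclass
class Accuracy:
    sensitivity: float  # share of abnormal cases correctly flagged
    specificity: float  # share of normal cases correctly cleared


def diagnostic_accuracy(reference, predicted):
    """Compare binary readings (1 = abnormal, 0 = normal) with a reference standard."""
    pairs = list(zip(reference, predicted))
    tp = sum(1 for r, p in pairs if r == 1 and p == 1)
    fn = sum(1 for r, p in pairs if r == 1 and p == 0)
    tn = sum(1 for r, p in pairs if r == 0 and p == 0)
    fp = sum(1 for r, p in pairs if r == 0 and p == 1)
    return Accuracy(sensitivity=tp / (tp + fn), specificity=tn / (tn + fp))


# Hypothetical toy data: ten studies read by a model and by a clinician.
reference = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
model     = [1, 1, 1, 0, 0, 0, 0, 0, 1, 0]
clinician = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]

model_acc = diagnostic_accuracy(reference, model)
clinician_acc = diagnostic_accuracy(reference, clinician)
print(model_acc)      # sensitivity 0.75, specificity 5/6
print(clinician_acc)  # sensitivity 0.5, specificity 1.0
```

Evaluating both readers on the same cases and against the same reference standard is what makes the two figures comparable; a real study would also report confidence intervals and use an independent test set.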

Development of computer-aided diagnosis systems: challenges and opportunities

To better understand AI-based computer-aided diagnosis systems, one has to look at the details of their development. The four distinct phases – inception, development, validation, and deployment – have their respective challenges, as well as potential opportunities for healthcare stakeholders to influence the development of CAD systems (Figure 1). Close collaboration between healthcare professionals and computer scientists is therefore required to obtain clinically useful AI solutions.

Figure 1. Challenges and opportunities in the development of computer-aided diagnosis (CAD) systems.

Currently, DL algorithms require data annotation, which is a complex and costly task. Most AI models are trained on manually annotated data with labelling of specific structures, such as normal and neoplastic tissue within an organ or different organs in 3D-reconstructed preoperative CT and MRI. AI models learn from these annotated training data and can then automate such labelling (i.e., model prediction) 7, 8. Improved machine learning techniques such as semi-supervised and self-supervised algorithms can reduce this annotation burden without compromising performance. Standards for data storage and transfer, such as the image formats DICOM (Digital Imaging and Communications in Medicine) and NIfTI (Neuroimaging Informatics Technology Initiative), and the common data model of the OHDSI (Observational Health Data Sciences and Informatics) collaborative, facilitate access to the large amounts of data needed for successful machine learning 9-11. In addition, automation of machine learning (AutoML) and of clinical validation (e.g., MedPerf 12) is expected to improve the speed and efficiency of AI development in the near future, with clinician involvement.

Standardisation of imaging studies, image analysis, and structured reporting is key to international comparability and collaboration, to reduce interpretation errors and facilitate computer assistance. However, there are discrepancies in the global availability and use of referral guidelines, radiology quality and safety programmes, and reporting systems 13. Smart assistance for time-consuming image analysis and structured reporting is very welcome. Two nuclear medicine imaging experts turned to ChatGPT to test its ability to write a radiology report within a few seconds, answer specific technical questions, or make recommendations, and pointed out its current limitations, namely that convincing-sounding output is not necessarily correct or up-to-date 14.

Integration of AI and imaging into diagnostic imaging and interventional practice

In a recently published survey, the majority of the 149 participating radiologists in training expressed interest in using AI, in collaborating on AI projects, and in integrating AI training into their curriculum 15. In a survey of medical students on diagnostic and interventional radiology (IR), one third saw AI as a threat to radiologists, while overall there was a belief in the future of IR and a call for its more detailed inclusion in the medical curriculum 16. Given the technical improvements and increasingly user-friendly software formats, the involvement of clinicians in training and specialists in the CAD development and validation workflow will have a considerable impact on its adoption.

AI assistance may reduce radiologist workload or enhance clinician performance. An automated AI tool can already differentiate between normal and abnormal X-rays. With a sensitivity of more than 99% for both abnormal and critical X-rays, autonomous reporting of normal X-rays could free up a considerable amount of radiologist time for other tasks 17. Early and automatic detection of disease in various imaging modalities can go as far as predicting lung cancer risk 1-6 years into the future, as shown with the model Sybil, which screens low-dose chest CTs while running in the background at radiology reading stations, without radiologist annotations or access to clinical data 18. AI-powered software has recently been shown to reduce the number of missed liver metastases in contrast-enhanced CT, which is particularly useful for hard-to-detect small lesions with low contrast 19. Another recent study assessed AI-enhanced identification of focal liver lesions with intraoperative ultrasound used to guide open liver resections 20. Although the accuracy of liver lesion classification with standard abdominal ultrasound was not reached, and there was no differentiation between different lesion types, this proof-of-concept study points towards further research to optimise AI assistance in intraoperative imaging 20. Automatic volumetric reconstruction of diagnostic imaging studies serves to create a patient-specific virtual model, allowing identification of normal, variant and pathological anatomical findings, surgical planning and navigation, and thus intraoperative guidance 8, 21-23.
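The trade-off behind autonomous reporting of normal studies can be sketched as follows: an operating threshold on the model's abnormality score is chosen so that a target sensitivity is preserved, and every study scoring below it would be reported as normal without radiologist review. This is a simplified illustration and not the method of the cited study; the function names, scores, and labels are hypothetical.

```python
import math


def threshold_for_sensitivity(scores, labels, target_sensitivity):
    """Return the highest score threshold that still flags the required
    share of abnormal studies (label 1) as 'needs radiologist review'."""
    abnormal = sorted(s for s, y in zip(scores, labels) if y == 1)
    # Small epsilon guards against floating-point noise before rounding up.
    required_hits = max(1, math.ceil(target_sensitivity * len(abnormal) - 1e-9))
    allowed_misses = len(abnormal) - required_hits
    return abnormal[allowed_misses]


def triage_summary(scores, labels, threshold):
    """Sensitivity at the threshold and share of studies auto-reported as normal."""
    n_abnormal = sum(labels)
    detected = sum(1 for s, y in zip(scores, labels) if y == 1 and s >= threshold)
    auto_reported = sum(1 for s in scores if s < threshold)
    return detected / n_abnormal, auto_reported / len(scores)


# Hypothetical abnormality scores (0 to 1) and reference labels for ten X-rays.
scores = [0.05, 0.10, 0.20, 0.30, 0.35, 0.60, 0.40, 0.70, 0.80, 0.95]
labels = [0, 0, 0, 0, 0, 0, 1, 1, 1, 1]

threshold = threshold_for_sensitivity(scores, labels, target_sensitivity=1.0)
sensitivity, auto_fraction = triage_summary(scores, labels, threshold)
print(threshold, sensitivity, auto_fraction)  # 0.4 1.0 0.5
```

In this toy example, half of all studies fall below the threshold and none of them is abnormal. Choosing and evaluating the threshold on the same data is optimistic; in practice the threshold would be fixed on one dataset and its sensitivity confirmed on an independent one.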

Radiology is currently the most advanced medical specialty in the use of AI applications related to image interpretation for diagnostic imaging performed in the radiology suite. There is a need to transfer the indicators and metrics developed for diagnostic radiology into the operating room (OR) imaging environment, where currently AI support is relatively scarce. Considering the density of data generated by various imaging modalities in ORs, the potential of AI for data management has resulted in the creation of Surgical Data Science 21, 24.

In addition, patient positioning in the OR differs from that during routine imaging, which makes registration of preoperative imaging to the changed position of the target anatomy challenging. Immediate preoperative or intraoperative imaging in the OR is used to align reconstructed routine imaging studies with the surgical position, and to re-align them continuously if necessary, for adaptive intraoperative diagnostic support.
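The alignment step can be illustrated with a deliberately simplified sketch: a least-squares rigid registration (rotation plus translation) of paired landmarks in two dimensions. Clinical registration is three-dimensional and often has to account for organ deformation, so this is only meant to show the principle; the function names and landmark coordinates are hypothetical.

```python
import math


def rigid_register_2d(source, target):
    """Least-squares rotation angle and translation mapping paired 2D
    landmarks in `source` onto `target`."""
    n = len(source)
    sx = sum(x for x, _ in source) / n
    sy = sum(y for _, y in source) / n
    tx = sum(x for x, _ in target) / n
    ty = sum(y for _, y in target) / n
    dot = cross = 0.0
    for (px, py), (qx, qy) in zip(source, target):
        ax, ay = px - sx, py - sy  # source landmark relative to its centroid
        bx, by = qx - tx, qy - ty  # target landmark relative to its centroid
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)  # angle minimising the squared distances
    c, s = math.cos(theta), math.sin(theta)
    shift = (tx - (c * sx - s * sy), ty - (s * sx + c * sy))
    return theta, shift


def apply_rigid(points, theta, shift):
    """Rotate points by theta and translate them by shift."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + shift[0], s * x + c * y + shift[1]) for x, y in points]


# Hypothetical landmarks in a preoperative image, and the same landmarks
# after the patient has been repositioned (rotated 30 degrees and shifted).
preop = [(0.0, 0.0), (10.0, 0.0), (0.0, 5.0), (4.0, 7.0)]
intraop = apply_rigid(preop, math.radians(30), (12.0, -3.0))

theta, shift = rigid_register_2d(preop, intraop)
aligned = apply_rigid(preop, theta, shift)
print(round(math.degrees(theta), 6), [round(v, 6) for v in shift])
```

Re-running such an estimate whenever new intraoperative landmarks become available corresponds to the continuous re-alignment described above.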

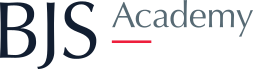

Manufacturers and suppliers of interventional imaging equipment are increasingly providing multimodal integration to complement intraoperative data with information from various preoperative studies, e.g., with segmentations of target anatomy and preoperative planning. In the Hybrid Operating Room (OR) of IHU Strasbourg, jointly built with the support of Siemens Healthineers (Figure 2), MRI, CT, cone-beam CT/angiography, and ultrasound imaging complement each other in one OR suite 25. AI tools are currently being developed to support precise data matching and image fusion between these modalities. In particular, image fusion approaches enable intraoperative integration of the soft tissue contrast and functional imaging provided by the MRI in the adjacent theatre, thus circumventing the material constraints that a magnetic field imposes in the OR. Dynamic respiratory cycle and organ movement data acquired at high speed and high resolution with a CT can be extracted and transferred to the angioCT for needle-guided procedures (gating).

Figure 2. Hybrid Operating Room (OR) of IHU Strasbourg combining MRI, ultrasound, CT and cone-beam CT (Siemens Healthineers, Forchheim, Germany).

Based on the Hybrid OR and infrastructure projects such as the Surgical Control Tower for intraoperative workflow support to increase safety and efficiency, the OR of the future is emerging 24-26.

Although it contains a variety of imaging modalities and other technologies, it is a clutter-free environment focused on information sharing and data analysis, designed to present coordinated and integrated information to each interventional team 27. Interactive screens display patient-specific information and imaging studies, as well as AI-assisted access to relevant information on, for example, international standards and guidelines or a comparison between normal and variant anatomy encountered. It enables interactivity between teams and equipment, fusion of different imaging data (e.g., ultrasound and CT, CT and laparoscopy), simulation and planning of procedures, and real-time documentation and analysis (Figures 3 and 4).

Figure 3. The next-generation hybrid operating room integrating AI and robotics for diagnostic imaging, procedure planning and execution. The OR of the future is envisioned as the centre of a technology ecosystem. Illustrated technologies include advanced interactive digital displays with real-time connectivity and AI analytics, mixed-reality environments, and robotic applications for various interventions, imaging (ultrasound, cone-beam CT, intraoperative CT/MRI, etc.), nursing assistance and sterile instrument management, as well as a predictive logistics supply system with Automatic Guided Vehicles (AGV). (Copyright Barbara Seeliger/ Carlos Amato; Chengyuan Yang; Niloofar Badihi; IHU Strasbourg and Cannon Design USA)

Figure 4. The next-generation command and control room for the hybrid operating room.

The traditional control room is re-envisioned to include miscellaneous robotic system controls for diagnostic and interventional imaging and surgical procedures (surgical robot, scrub nurses and instrument tables, automatic guided vehicles, etc.), interactive screens, advanced procedure guidance (monitoring/planning/analysis) and workflow analysis with real-time AI assistance, simulation with virtual and mixed reality scenarios, connectivity with the surgical simulation and training site to enable observation and multidisciplinary collaboration in videoconferencing or metaverse environments. (Copyright Barbara Seeliger/ Carlos Amato; Chengyuan Yang; Niloofar Badihi; IHU Strasbourg and Cannon Design USA)

Outlook

Medical decision-making goes beyond one data modality at a time, and narrowly defined tasks such as detecting a nodule in one imaging modality. The many complementary sources of information clinicians routinely rely on need to be integrated into healthcare AI applications. Multimodal medical AI 21 will be able to process multiple data sources to reproduce and potentially exceed the kind of data processing currently performed by clinicians when treating and following up their patients, to detect disease early in high-risk individuals or to monitor disease activity over time, and tailor treatment indications to the evolution of lesions over time. Predicting individual treatment outcomes that may require treatment adjustment remains a challenge, and algorithms to improve such predictions, e.g., for radiotherapy 28, are very welcome.

The cost of research and development of AI can be high and affect the retail price of the system, making it potentially costly for healthcare stakeholders. Therefore, identifying a suitable reimbursement system for medical AI is necessary to achieve higher adoption. Data sharing agreements such as the one between Mayo Clinic and Google are a clear sign that tech giants seek to use healthcare data to develop AI technologies, and that health research is counting on advances using AI 29. The creation of large datasets focused on accessibility of information and transparency opens new perspectives, but it also challenges the traditional legal frameworks of data protection, which prohibit the use of patient data for commercial purposes. The French “Instituts Hospitalo-Universitaires (IHU)”, where academic clinicians and scientists collaborate at the intersection of the public and private sectors, are further candidates to support the medical, technical, and regulatory revolution related to the use of health data in research with AI. The broader development of AI algorithms in academic-industry partnerships will go beyond interpreting data separately to linking them and discovering pathophysiological patterns, up to creating digital twins.

Conclusion

The availability of software and hardware computing resources as well as large digitised medical datasets has led to a proliferation of data-driven methods (e.g., deep learning), ushering in an era of AI applications in diagnostic and interventional medical imaging. At this stage, closer collaboration between clinicians and engineers in academic centres and industry is needed to build more clinically relevant, trustworthy AI systems. The development of medical AI systems must continue to be driven by economic benefits and meaningful healthcare outcomes, validated with the rigorous methodology of clinical trials.

References

Murphy EA, Ehrhardt B, Gregson CL, von Arx OA, Hartley A, Whitehouse MR, Thomas MS, Stenhouse G, Chesser TJS, Budd CJ, Gill HS. Machine learning outperforms clinical experts in classification of hip fractures. Sci Rep 2022;12(1): 2058.

Venkat V, Abdelhalim H, DeGroat W, Zeeshan S, Ahmed Z. Investigating genes associated with heart failure, atrial fibrillation, and other cardiovascular diseases, and predicting disease using machine learning techniques for translational research and precision medicine. Genomics 2023;115(2): 110584.

ACR Data Science Institute AI Central. https://aicentral.acrdsi.org [20/4/2023].

Aggarwal R, Sounderajah V, Martin G, Ting DSW, Karthikesalingam A, King D, Ashrafian H, Darzi A. Diagnostic accuracy of deep learning in medical imaging: a systematic review and meta-analysis. NPJ Digit Med 2021;4(1): 65.

Vasey B, Novak A, Ather S, Ibrahim M, McCulloch P. DECIDE-AI: a new reporting guideline and its relevance to artificial intelligence studies in radiology. Clin Radiol 2023;78(2): 130-136.

Maier-Hein L, Reinke A, Christodoulou E, Glocker B, Godau P, Isensee F, Kleesiek J, Kozubek M, Reyes M, Riegler MA. Metrics reloaded: Pitfalls and recommendations for image analysis validation. arXiv preprint arXiv:2206.01653 2022.

Seeliger B, Meyer A, Alesina PF, Walz MK, Padoy N, Mutter D. Automatic detection of normal and neoplastic adrenal tissue in computed tomography (CT). In: Abstracts of the 40th workshop of the Surgical Working Group on Endocrinology (CAEK). Die Chirurgie 2022;93(12): 1174-1175.

Seeliger B, Collins T, Marescaux J. Implemented Imaging and Artificial Intelligence. In: The Foundation and Art of Robotic Surgery, Giulianotti PC, Benedetti E, Mangano A (eds). McGraw Hill, 2023.

Wilhelm D, Bouarfa L, Navab N, Meining A, Muller-Stich B, Jarc A, Padoy N. Artificial Intelligence in Visceral Medicine. Visc Med 2020;36(6): 471-475.

Li X, Morgan PS, Ashburner J, Smith J, Rorden C. The first step for neuroimaging data analysis: DICOM to NIfTI conversion. Journal of Neuroscience Methods 2016;264: 47-56.

Hripcsak G, Duke JD, Shah NH, Reich CG, Huser V, Schuemie MJ, Suchard MA, Park RW, Wong IC, Rijnbeek PR, van der Lei J, Pratt N, Noren GN, Li YC, Stang PE, Madigan D, Ryan PB. Observational Health Data Sciences and Informatics (OHDSI): Opportunities for Observational Researchers. Stud Health Technol Inform 2015;216: 574-578.

Karargyris A, Umeton R, Sheller MJ, Aristizabal A, George J, Bala S, Beutel DJ, Bittorf V, Chaudhari A, Chowdhury A. MedPerf: open benchmarking platform for medical artificial intelligence using federated evaluation. arXiv preprint arXiv:2110.01406 2021.

Mutch CA, Rehani B, Lau LSW. Worldwide Implementation of Radiology Quality and Safety Programs. J Am Coll Radiol 2017;14(11): 1504-1509 e1501.

Buvat I, Weber W. Nuclear Medicine from a Novel Perspective: Buvat and Weber Talk with OpenAI's ChatGPT. J Nucl Med 2023.

Hashmi OU, Chan N, de Vries CF, Gangi A, Jehanli L, Lip G. Artificial intelligence in radiology: trainees want more. Clin Radiol 2023;78(4): e336-e341.

Auloge P, Garnon J, Robinson JM, Dbouk S, Sibilia J, Braun M, Vanpee D, Koch G, Cazzato RL, Gangi A. Interventional radiology and artificial intelligence in radiology: Is it time to enhance the vision of our medical students? Insights Imaging 2020;11(1): 127.

Plesner LL, Muller FC, Nybing JD, Laustrup LC, Rasmussen F, Nielsen OW, Boesen M, Andersen MB. Autonomous Chest Radiograph Reporting Using AI: Estimation of Clinical Impact. Radiology 2023: 222268.

Mikhael PG, Wohlwend J, Yala A, Karstens L, Xiang J, Takigami AK, Bourgouin PP, Chan P, Mrah S, Amayri W, Juan YH, Yang CT, Wan YL, Lin G, Sequist LV, Fintelmann FJ, Barzilay R. Sybil: A Validated Deep Learning Model to Predict Future Lung Cancer Risk From a Single Low-Dose Chest Computed Tomography. J Clin Oncol 2023: JCO2201345.

Nakai H, Sakamoto R, Kakigi T, Coeur C, Isoda H, Nakamoto Y. Artificial intelligence-powered software detected more than half of the liver metastases overlooked by radiologists on contrast-enhanced CT. European Journal of Radiology 2023;163: 110823.

Barash Y, Klang E, Lux A, Konen E, Horesh N, Pery R, Zilka N, Eshkenazy R, Nachmany I, Pencovich N. Artificial intelligence for identification of focal lesions in intraoperative liver ultrasonography. Langenbecks Arch Surg 2022;407(8): 3553-3560.

Maier-Hein L, Eisenmann M, Sarikaya D, Marz K, Collins T, Malpani A, Fallert J, Feussner H, Giannarou S, Mascagni P, Nakawala H, Park A, Pugh C, Stoyanov D, Vedula SS, Cleary K, Fichtinger G, Forestier G, Gibaud B, Grantcharov T, Hashizume M, Heckmann-Notzel D, Kenngott HG, Kikinis R, Mundermann L, Navab N, Onogur S, Ross T, Sznitman R, Taylor RH, Tizabi MD, Wagner M, Hager GD, Neumuth T, Padoy N, Collins J, Gockel I, Goedeke J, Hashimoto DA, Joyeux L, Lam K, Leff DR, Madani A, Marcus HJ, Meireles O, Seitel A, Teber D, Uckert F, Muller-Stich BP, Jannin P, Speidel S. Surgical data science - from concepts toward clinical translation. Med Image Anal 2022;76: 102306.

Park SH, Kim KY, Kim YM, Hyung WJ. Patient-specific virtual three-dimensional surgical navigation for gastric cancer surgery: A prospective study for preoperative planning and intraoperative guidance. Front Oncol 2023;13: 1140175.

Chen Z, Zhang Y, Yan Z, Dong J, Cai W, Ma Y, Jiang J, Dai K, Liang H, He J. Artificial intelligence assisted display in thoracic surgery: development and possibilities. J Thorac Dis 2021;13(12): 6994-7005.

Mascagni P, Padoy N. OR black box and surgical control tower: Recording and streaming data and analytics to improve surgical care. J Visc Surg 2021;158(3S): S18-S25.

Gimenez M, Gallix B, Costamagna G, Vauthey JN, Moche M, Wakabayashi G, Bale R, Swanstrom L, Futterer J, Geller D, Verde JM, Garcia Vazquez A, Boskoski I, Golse N, Muller-Stich B, Dallemagne B, Falkenberg M, Jonas S, Riediger C, Diana M, Kvarnstrom N, Odisio BC, Serra E, Overduin C, Palermo M, Mutter D, Perretta S, Pessaux P, Soler L, Hostettler A, Collins T, Cotin S, Kostrzewa M, Alzaga A, Smith M, Marescaux J. Definitions of Computer-Assisted Surgery and Intervention, Image-Guided Surgery and Intervention, Hybrid Operating Room, and Guidance Systems: Strasbourg International Consensus Study. Ann Surg Open 2020;1(2): e021.

Mascagni P, Longo F, Barberio M, Seeliger B, Agnus V, Saccomandi P, Hostettler A, Marescaux J, Diana M. New intraoperative imaging technologies: Innovating the surgeon's eye toward surgical precision. J Surg Oncol 2018;118(2): 265-282.

Vercauteren T, Unberath M, Padoy N, Navab N. CAI4CAI: The Rise of Contextual Artificial Intelligence in Computer Assisted Interventions. Proc IEEE Inst Electr Electron Eng 2020;108(1): 198-214.

Jalalifar SA, Soliman H, Sahgal A, Sadeghi-Naini A. A Self-Attention-Guided 3D Deep Residual Network With Big Transfer to Predict Local Failure in Brain Metastasis After Radiotherapy Using Multi-Channel MRI. IEEE J Transl Eng Health Med 2023;11: 13-22.

Webster P. Big tech companies invest billions in health research. Nat Med 2023.
